Test automation is an integral part of modern software development, primarily focused on enhancing the efficiency and effectiveness of testing processes. But for some reason, after decades of effort, success is still anything but obvious. I think anyone can mention numerous examples where the expected or hoped-for success did not materialise.

You would expect that, after all this time, we would be quite skilled at it. Yet I believe a number of reasons prevent us from fully harnessing the true power of test automation.

Firstly, there is a vast number of tools available, and the pace at which these tools develop and surpass each other is impressively high. Just keeping up with these changes could be a full-time job. But aside from that, we also need to ensure that we don’t end up using a new tool every few months, which we then have to learn, implement, and manage.

It’s not by chance that I’m focusing on the tools here. This is exactly one of the reasons our success is often jeopardised: an excessive focus on the tools! Test automation encompasses so much more than just having a tool to avoid manual testing, yet training programmes almost always focus heavily on tool training.

Let’s Look Beyond the Tools

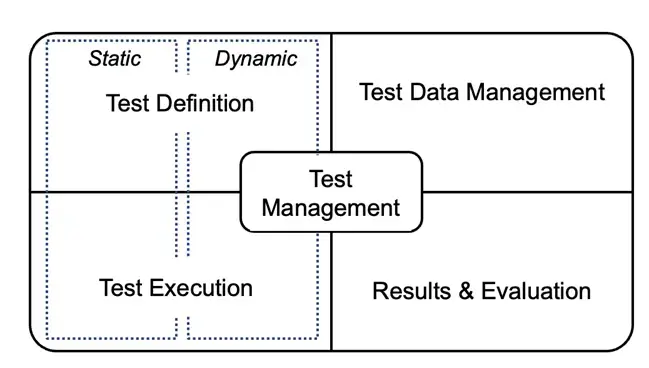

I am convinced that the likelihood of success significantly increases when we pay more attention to truly understanding what test automation entails. In test automation, you deal with a broad and diverse landscape that extends beyond test definition and execution. Looking at the whole picture, test management, test data management, and results and evaluation all play an essential role. However, we are often not aware of this.

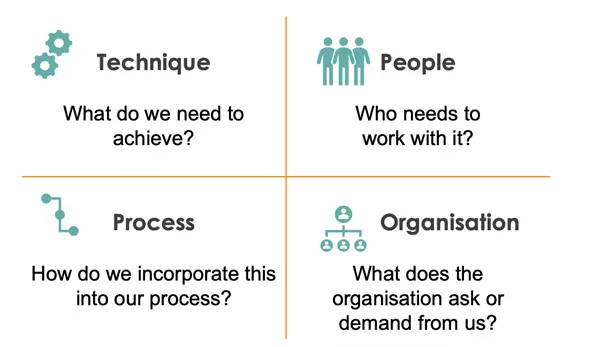

When we look beyond the tools themselves, an entirely new world opens up: that of the relevant aspects impacting the implementation of test automation. Questions that then arise include:

- Which techniques are we working with, and which of them need to be supported?

- How do we optimally integrate the test (automation) process into existing processes?

- What does the organisation itself demand and require from us?

- How does the human factor influence the choices we make?

If we are aware of which questions to ask and how to integrate the answers into our architecture, we increase the likelihood of a successful test automation implementation. One challenge remains, though: we won’t gain the necessary knowledge if we continue to focus on learning yet another tool.

Let’s Make a Change: Learn About Test Automation!

Fortunately, there are now training programmes available specifically aimed at teaching that knowledge. Certified Test Automation Professional (CTAP) is a good example of this, and I am very enthusiastic about it. The CTAP programme is designed to educate its participants on all critical aspects of test automation.

This training programme focuses on things like:

- Different areas of application for test automation

- Aspects that impact test automation

- Test architecture and tool selection

- The impact of AI on test automation

- Principles and methodologies

- And much more…

It balances important theoretical knowledge with useful practical skills. Armed with that expertise, you will undoubtedly be able to ask the right questions and uncover the necessary answers.

Dutch organisations like the Tax Office (Belastingdienst) and the Social Security Agency (UWV) are already embracing this training and the associated certification, and they are seeing many positive effects. They cite increased quality and a higher level of collaboration around test automation as major advantages. Additionally, it helps to have a common frame of reference and a clear understanding of what the world of test automation entails.

Ready to join the change and significantly increase the chances of success for test automation? Then dive further into the Certified Test Automation Professional programme or sign up for one of our training sessions! More information can be found here: Certified Test Automation Professional CTAP 2.0

Author

Frank van der Kuur

Frank embarked on his IT journey early in life, with a significant portion of his career devoted to the field of testing. Throughout this time, he has supported various organisations in improving the quality of their products. By bridging the gap between process and technology, he strives to enhance the efficiency of testing efforts.

Alongside his role as a practical consultant, Frank is also an enthusiastic trainer. He takes pleasure in helping his peers improve through training on testing tools, the testing process, or on-the-job coaching.

BQA are exhibitors at this year’s AutomationSTAR Conference EXPO. Join us in Amsterdam, 10-11 November 2025.